Verizon: Conversational AI Before the GenAI Wave

Role: Product Manager · 2016–2022

Note: To comply with NDA obligations, I have omitted and obfuscated confidential information. The views expressed here are my own and do not necessarily reflect those of my employer.

Starting in the deep end

I joined Verizon’s Open Innovation Lab in 2016, fresh out of Parsons School of Design. The lab was a six-month residency where small teams researched new product opportunities and built functional prototypes within the constraints of the business. My focus was chatbots, and by the end we were pitching directly to leadership across the company.

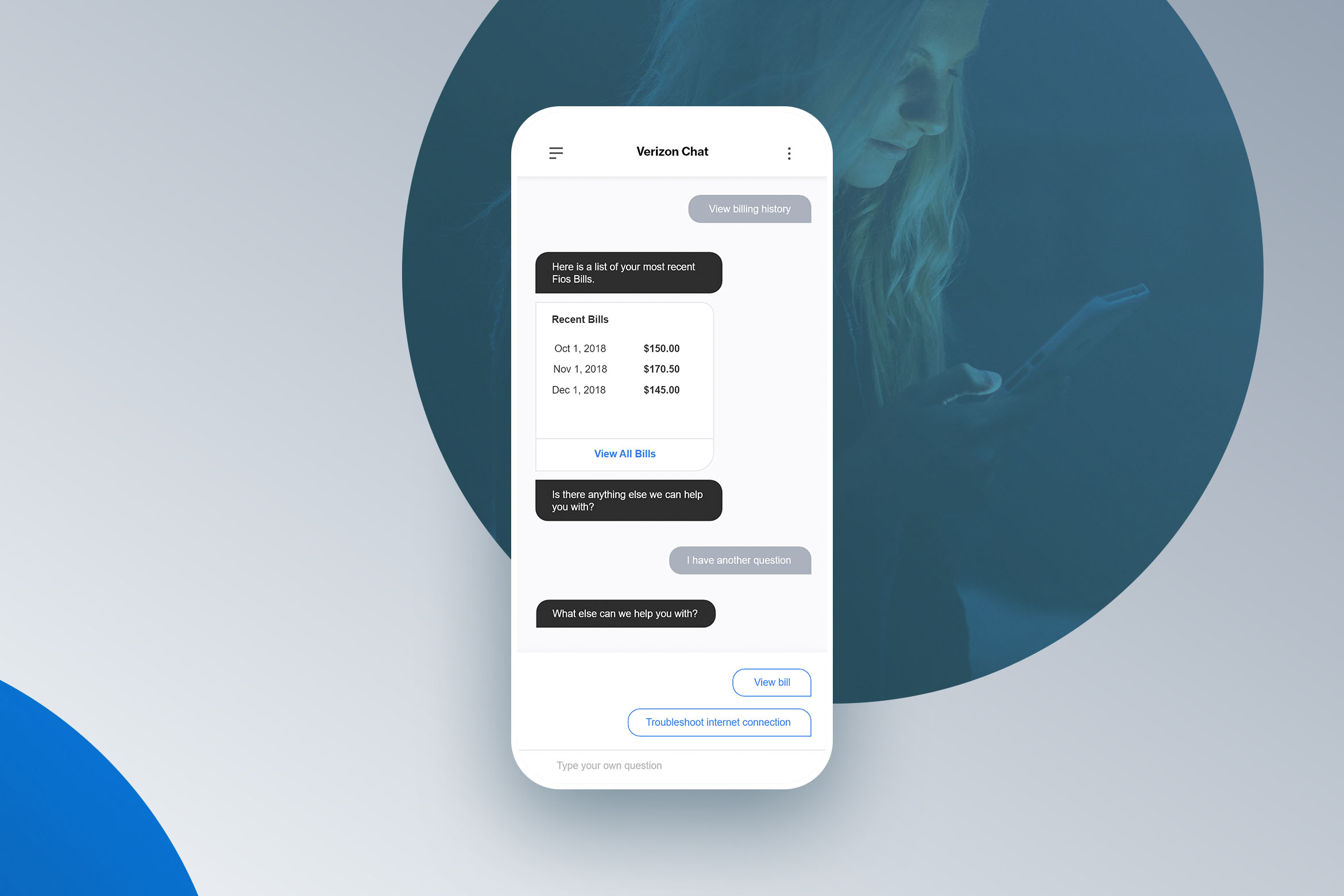

That residency turned into a six-year run on the Omni Customer Experience team, where I led conversational commerce strategy across all of Verizon’s customer-facing channels: mobile apps, websites, social media, SMS, and business messaging. The scale was staggering: over 150 million customers, every one of whom deserved a support experience that didn’t feel like talking to a wall.

Learning what customer empathy actually means

Everyone in product talks about empathy. In the chatbot world, it’s easy to be bad at it. Early on, I realised that most automated experiences fail not because the technology is broken, but because nobody stopped to ask: what just happened to this person before they opened this chat?

That question became the foundation of how I thought about every use case. A customer who just saw an unexpected charge on their bill is in a completely different emotional state from someone browsing new plans. If the bot treats both interactions the same way, you’ve failed the first customer. At Verizon’s scale, neglecting even 1% of users means more than a million frustrated people who trusted you to help them.

I pushed for context-aware experiences that considered the customer’s recent journey: what actions they’d taken, what events had occurred, what they likely needed right now. When we detected that someone was dealing with a serious issue, the right move was often to step back and hand off to a live representative for white-glove service. Knowing when not to automate was just as important as knowing what to automate.

The best automated experiences know when to get out of the way.

This also meant getting the tone right. There’s a Goldilocks zone in conversational design where responses don’t come across as cold and robotic, but aren’t trying too hard to be clever either. A brand serving customers of every age and background across the entire country needs to be inclusive and measured. I spent a lot of time with experience designers refining conversation flows, defining personality guidelines, and pressure-testing edge cases. Typography, response pacing, escalation triggers: all of it mattered.

Ahead of the curve on AI

This was 2016 through 2022. GPT-3 launched in 2020, but generative AI as a mainstream product category didn’t exist for most of my time at Verizon. We were building intelligent conversational experiences with the tools available: intent classification, entity extraction, decision trees, and carefully authored flows. It was painstaking, manual work to make a bot feel natural.

But I could see where the technology was heading. The trajectory from rule-based systems to statistical NLU to transformer models was clear if you were paying attention. We started working with industry partners to evaluate and integrate the latest AI models as they became available, positioning Verizon’s conversational platform to adopt generative capabilities before most enterprises were even thinking about it.

What made this possible was that we’d built the right foundation. The platform architecture was designed to be model-agnostic: the conversation orchestration layer, the context engine, and the channel abstraction did not depend on any particular AI model. When better models arrived, we could plug them in without rebuilding the customer experience from scratch. That foresight, building for a future we could see coming even if we couldn’t name it yet, is something I’m proud of.

We were solving for a generative AI future with pre-generative AI tools, and we built an architecture ready for the shift.

What I carried forward

Six years at this scale taught me things I couldn’t have learned anywhere else. I learned that customer empathy isn’t a design exercise; it’s an operational discipline that has to be embedded in every product decision, every edge case, every escalation path. I learned that the best product work at a large enterprise isn’t about building something flashy; it’s about building something that works correctly for millions of people who just want their problem solved.

And I learned to trust my instincts about where technology is going. The conversational AI space has since been transformed by generative models, and the principles we built around (context awareness, tone calibration, knowing when to hand off to a human) turned out to be exactly the right foundation for that new world.